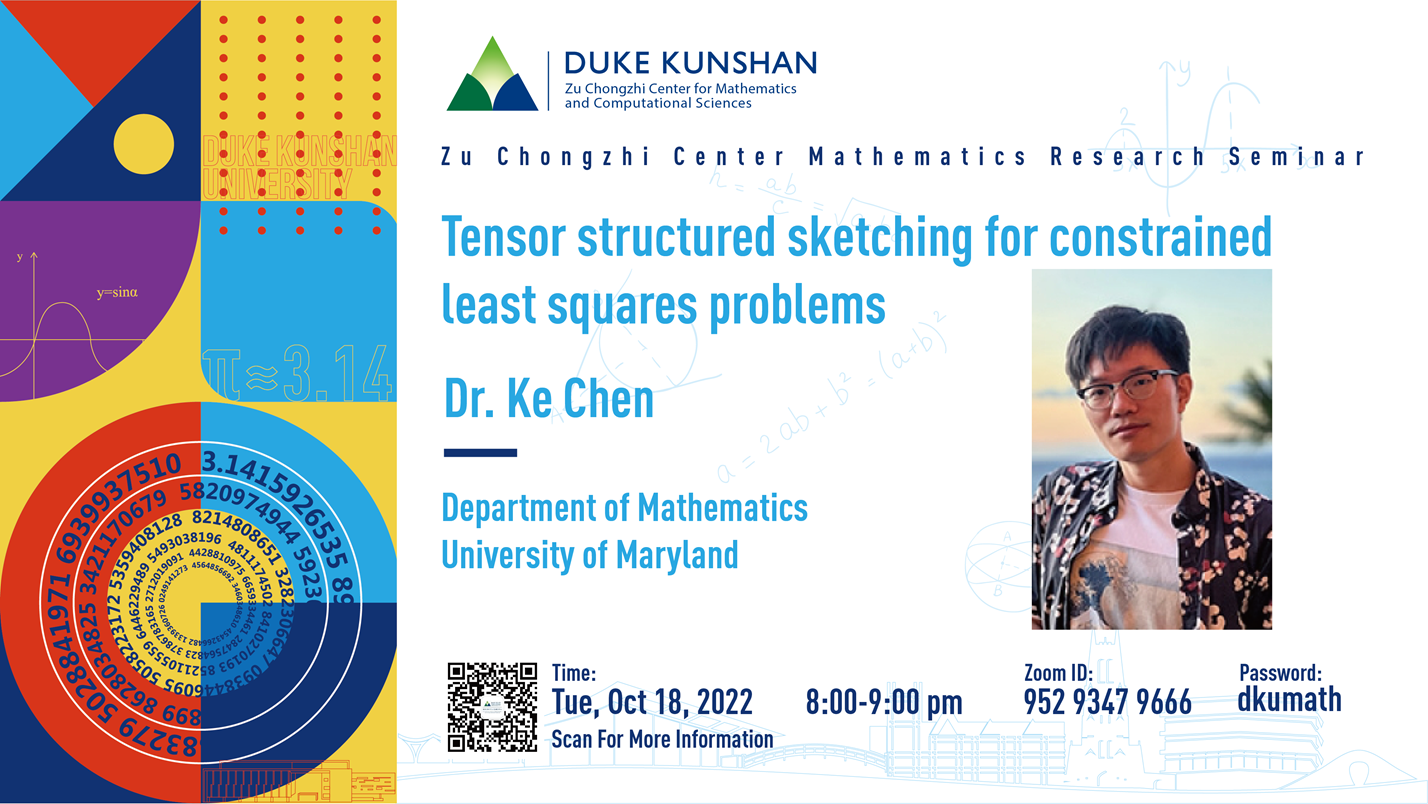

Zu Chongzhi Center Mathematics Research Seminar

Date and Time (China Standard Time): Tuesday, October 18, 8:00-9:00pm

Zoom ID: 952 9347 9666

Passcode: dkumath

Title: Tensor structured sketching for constrained least squares problems

Speaker: Ke Chen

Bio: Ke Chen received his Ph.D. from the University of Wisconsin-Madison and is currently a postdoc at the University of Maryland. His research interests are in randomized methods for scientific machine learning.

Abstract: Constrained least squares problems arise in many applications. With high-dimensional input data, their memory and computation costs are usually high. We employ the so-called “sketching” strategy to project the least squares problem into a space of a lower “sketching dimension” via a random sketching matrix. The key idea of sketching is to reduce the dimension of the problem as much as possible while maintaining the approximation accuracy. In this talk, we will focus on least squares problems whose data matrix has tensor structure. Such structure is often present in linearized inverse PDE problems and tensor decomposition optimizations. To match the tensor structure of the problem, we utilize a general class of row-wise tensorized sub-Gaussian matrices as sketching matrices. We provide theoretical guarantees on the sketching dimension in terms of the error criterion and the failure probability. For unconstrained linear regression, we obtain an optimal estimate for the sketching dimension. For optimization problems with general constraint sets, we show that the sketching dimension depends on a statistical complexity that characterizes the geometry of the underlying problem. Our theory is demonstrated and validated in a few concrete examples, including unconstrained linear regression and sparse recovery problems.
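
For readers new to sketching, the idea in the abstract can be illustrated with a minimal NumPy sketch for the unconstrained case. This is not the speaker's implementation: the problem sizes, the noise level, the Kronecker-structured data matrix, the choice of Gaussian entries (one instance of a sub-Gaussian distribution), and the helper names `tensorized_gaussian_sketch` and `sketched_least_squares` are all assumptions made for illustration. Each row of the sketching matrix is the Kronecker product of two independent random vectors, which is one way to read "row-wise tensorized".

```python
import numpy as np


def tensorized_gaussian_sketch(m, n1, n2, rng):
    """Return an (m, n1*n2) matrix whose i-th row is kron(g_i, h_i) / sqrt(m).

    g_i and h_i are independent standard Gaussian vectors (an assumed choice
    of sub-Gaussian distribution, for illustration only).
    """
    G = rng.standard_normal((m, n1))
    H = rng.standard_normal((m, n2))
    # Row-wise outer product, flattened in the same order as np.kron(g, h).
    S = (G[:, :, None] * H[:, None, :]).reshape(m, n1 * n2)
    return S / np.sqrt(m)


def sketched_least_squares(A, b, S):
    """Solve min_x ||S A x - S b||_2, the sketched (unconstrained) problem."""
    x, *_ = np.linalg.lstsq(S @ A, S @ b, rcond=None)
    return x


# Illustrative sizes only: 900 rows reduced to a sketching dimension of 400.
rng = np.random.default_rng(0)
n1, n2, d1, d2, m = 30, 30, 4, 4, 400

# Data matrix with tensor (Kronecker) structure, plus a noisy right-hand side.
A = np.kron(rng.standard_normal((n1, d1)), rng.standard_normal((n2, d2)))
x_true = rng.standard_normal(d1 * d2)
b = A @ x_true + 0.1 * rng.standard_normal(n1 * n2)

# Reference solution of the full problem.
x_ls, *_ = np.linalg.lstsq(A, b, rcond=None)

# Solution of the sketched problem.
S = tensorized_gaussian_sketch(m, n1, n2, rng)
x_sk = sketched_least_squares(A, b, S)

# Ratio of residual norms; it is at least 1 because x_ls is the minimizer.
ratio = np.linalg.norm(A @ x_sk - b) / np.linalg.norm(A @ x_ls - b)
print(f"sketched / optimal residual ratio: {ratio:.3f}")
```

The printed ratio measures how much accuracy is lost by solving the smaller problem. For clarity the sketching matrix is formed explicitly here; with a Kronecker-structured data matrix the product `S @ A` could instead be computed factor by factor, which is the kind of structural match the abstract refers to.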